If the messages are processed by a single pool of consumers, you have to provide a mechanism that can preempt and suspend a task that's handling a low priority message if a higher priority message enters the queue. Identify the requirements for handling high priority items, and the resources that need to be allocated to meet your criteria.ĭecide whether all high priority items must be processed before any lower priority items. For example, a high priority message could be defined as a message that should be processed within 10 seconds. For example, you can prioritize critical tasks so that they're handled by receivers that run immediately, and less important background tasks can be handled by receivers that are scheduled to run at times that are less busy.Ĭonsider the following points when you decide how to implement this pattern:ĭefine the priorities in the context of the solution. The multiple message queue approach can help maximize application performance and scalability by partitioning messages based on processing requirements. You can even suspend processing for some very low priority queues by stopping all the consumers that listen for messages on those queues. If you implement the multiple message queue approach with separate pools of consumers for each queue, you can reduce the pool of consumers for lower priority queues.

High priority messages are still processed first (although possibly more slowly), and lower priority messages might be delayed for longer.

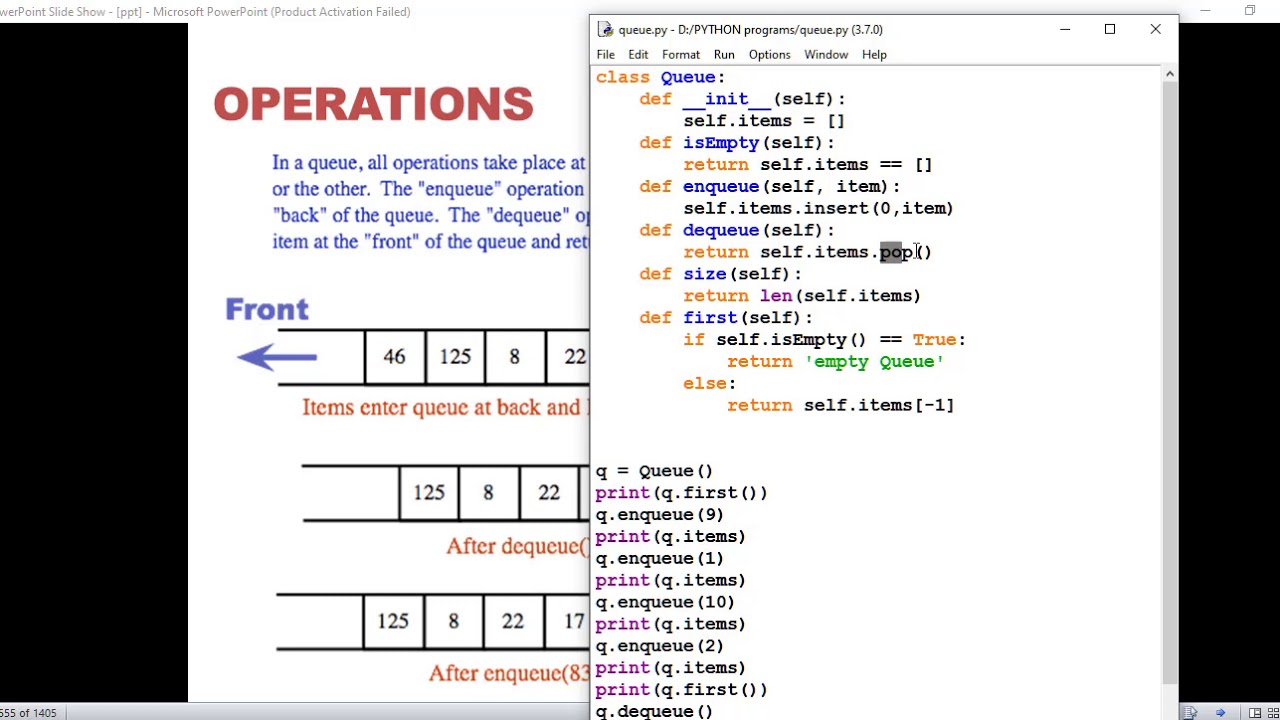

If you use the single-queue approach, you can scale back the number of consumers if you need to. It can help to minimize operational costs. It allows applications to meet business requirements that require the prioritization of availability or performance, such as offering different levels of service to different groups of customers. Using a priority-queuing mechanism can provide the following advantages: In the multiple pool approach, lower priority messages are always processed, but not as quickly as higher priority messages (depending on the relative size of the pools and the resources that are available for them). In theory, low priority messages could be continually superseded and might never be processed. In the single-pool approach, higher priority messages are always received and processed before lower priority messages. There are some semantic differences between a solution that uses a single pool of consumer processes (either with a single queue that supports messages that have different priorities or with multiple queues that each handle messages of a single priority), and a solution that uses multiple queues with a separate pool for each queue. This diagram illustrates the use of separate message queues for each priority:Ī variation on this strategy is to implement a single pool of consumers that check for messages on high priority queues first, and only after that start to fetch messages from lower priority queues. Higher priority queues can have a larger pool of consumers that run on faster hardware than lower priority queues. Each queue can have a separate pool of consumers. The application is responsible for posting messages to the appropriate queue. In systems that don't support priority-based message queues, an alternative solution is to maintain a separate queue for each priority. (See the Competing Consumers pattern.) The number of consumer processes can be scaled up and down based on demand. 
Most message queue implementations support multiple consumers.

The messages in the queue are automatically reordered so that those that have a higher priority are received before those that have a lower priority. The application that's posting a message can assign a priority. However, some message queues support priority messaging. SolutionĪ queue usually is a first-in, first-out (FIFO) structure, and consumers typically receive messages in the same order that they're posted to the queue. These requests should be processed earlier than lower priority requests that were previously sent by the application. In some cases, though, it's necessary to prioritize specific requests. In many cases, the order in which requests are received by a service isn't important. In the cloud, a message queue is typically used to delegate tasks to background processing. Context and problemĪpplications can delegate specific tasks to other services, for example, to perform background processing or to integrate with other applications or services. This pattern is useful in applications that offer different service level guarantees to individual clients. Prioritize requests sent to services so that requests with a higher priority are received and processed more quickly than those with a lower priority.
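To make the priority messaging behavior concrete, here is a minimal in-memory sketch in Python of a queue that reorders messages so that higher priority ones are received first. It is an illustration only, not the API of any real message broker: the class name `PriorityMessageQueue`, the `post` and `receive` methods, and the convention that a lower number means a higher priority are all assumptions made for this example.

```python
import heapq
import itertools


class PriorityMessageQueue:
    """Hypothetical in-memory stand-in for a queue with priority messaging."""

    def __init__(self):
        self._heap = []
        # A monotonically increasing counter breaks ties, so messages
        # with equal priority are still received in FIFO order.
        self._sequence = itertools.count()

    def post(self, body, priority):
        # Assumed convention: a lower number means a higher priority.
        heapq.heappush(self._heap, (priority, next(self._sequence), body))

    def receive(self):
        # Return the highest priority message, or None if the queue is empty.
        if not self._heap:
            return None
        _, _, body = heapq.heappop(self._heap)
        return body


queue = PriorityMessageQueue()
queue.post("nightly report", priority=2)
queue.post("password reset", priority=0)
queue.post("thumbnail job", priority=2)
queue.post("payment webhook", priority=0)

received = []
while (message := queue.receive()) is not None:
    received.append(message)

print(received)
```

The sequence counter is the design choice worth noting: without it, two messages with the same priority would be compared by their bodies, and FIFO order within a priority level would be lost.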
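The variation that keeps a separate queue for each priority, with a single pool of consumers that checks high priority queues first, can be sketched in the same way. The names `MultiQueueBroker` and `PRIORITIES` and the three priority levels are hypothetical; a real system would use its messaging service's own client library rather than in-process deques.

```python
from collections import deque

# Hypothetical priority levels, listed from highest to lowest.
PRIORITIES = ["high", "medium", "low"]


class MultiQueueBroker:
    """One FIFO queue per priority; consumers drain higher priorities first."""

    def __init__(self, priorities):
        self._order = list(priorities)
        self._queues = {p: deque() for p in self._order}

    def post(self, priority, body):
        # The posting application chooses the appropriate queue.
        self._queues[priority].append(body)

    def receive(self):
        # Check queues from highest to lowest priority and return the
        # first available message, or None if every queue is empty.
        for priority in self._order:
            pending = self._queues[priority]
            if pending:
                return priority, pending.popleft()
        return None


broker = MultiQueueBroker(PRIORITIES)
broker.post("low", "rebuild search index")
broker.post("high", "charge card")
broker.post("medium", "send receipt")
broker.post("high", "fraud check")

processed = []
while (item := broker.receive()) is not None:
    processed.append(item)

print(processed)
```

Because `receive` always starts at the highest priority queue, a steady stream of high priority messages would keep the low priority queue from ever being read. Giving each queue its own consumer pool avoids this at the cost of running more consumers.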
